In the past I wrote an article about Non-Uniform Memory Access (NUMA). NUMA is the memory architecture used in multiprocessor systems where each CPU is directly attached to its own bank of RAM. Accessing this local memory has lower latency than accessing memory attached to another CPU, which improves performance. Local memory is the memory that is on the same node as the CPU currently running the thread. Each CPU/RAM pair is called a NUMA node.

The system attempts to improve performance by scheduling threads on processors that are in the same node as the memory being used. It attempts to satisfy memory-allocation requests from within the node, but will allocate memory from other nodes if necessary. It also provides an API to make the topology of the system available to applications. You can improve the performance of your applications by using the NUMA functions to optimize scheduling and memory usage.
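The "local node first, other nodes if necessary" allocation policy described above can be illustrated with a small toy model. This is only a sketch of the idea, not the real Windows memory manager. The class names and node sizes are hypothetical.

```python
# Toy model of NUMA-aware allocation: try the node the thread runs on first,
# then fall back to the other nodes. NOT the actual Windows allocator;
# class names and sizes are hypothetical, for illustration only.
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class NumaNode:
    node_id: int
    free_mb: int


@dataclass
class ToyNumaSystem:
    nodes: List[NumaNode] = field(default_factory=list)

    def allocate(self, local_node: int, size_mb: int) -> Optional[int]:
        """Return the id of the node that satisfied the request, or None."""
        # Local node sorts first (key False), remote nodes keep their id order.
        ordered = sorted(self.nodes, key=lambda n: n.node_id != local_node)
        for node in ordered:
            if node.free_mb >= size_mb:
                node.free_mb -= size_mb  # take the memory from this node
                return node.node_id
        return None  # no single node has enough free memory


system = ToyNumaSystem([NumaNode(0, 1024), NumaNode(1, 1024)])
print(system.allocate(0, 512))  # 0: served from local memory
print(system.allocate(0, 768))  # 1: local node too full, remote memory used
print(system.allocate(0, 600))  # None: neither node has 600 MB free
```

The second request succeeds only because the model falls back to a remote node. That is exactly the case where a thread pays the higher latency of remote memory access.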

If you want to learn more, read the full article: Hyper-V Series: Configure NUMA

Virtual Machine Migration

NUMA works well in the majority of cases. It can cause problems, though, when we move a virtual machine from one host to another, in particular when the two servers have different hardware configurations. In that situation the VM may under-estimate or over-estimate the resources it can request from the Hyper-V host, and this hurts performance.

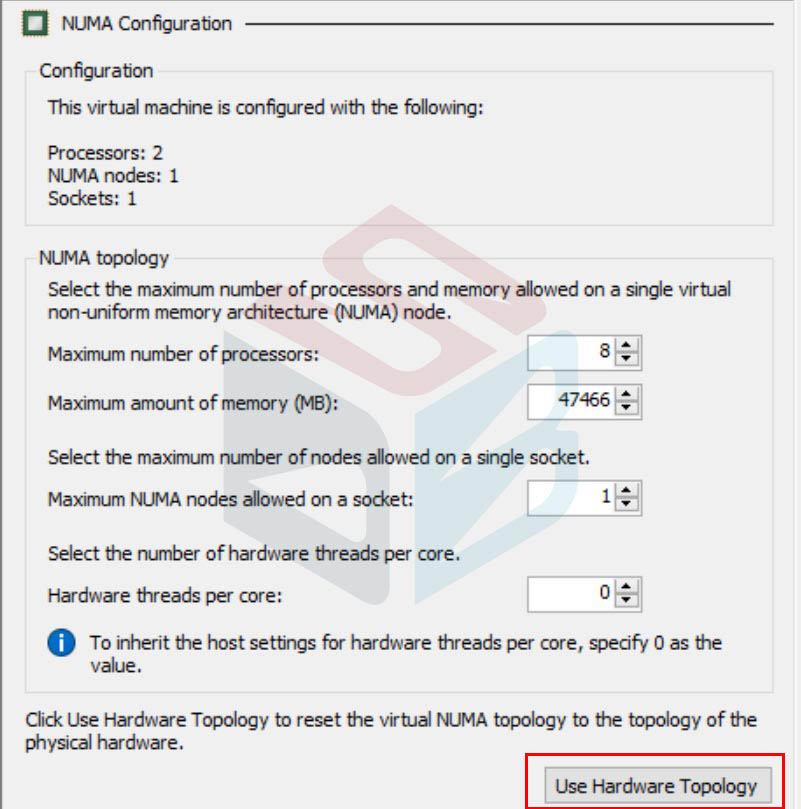

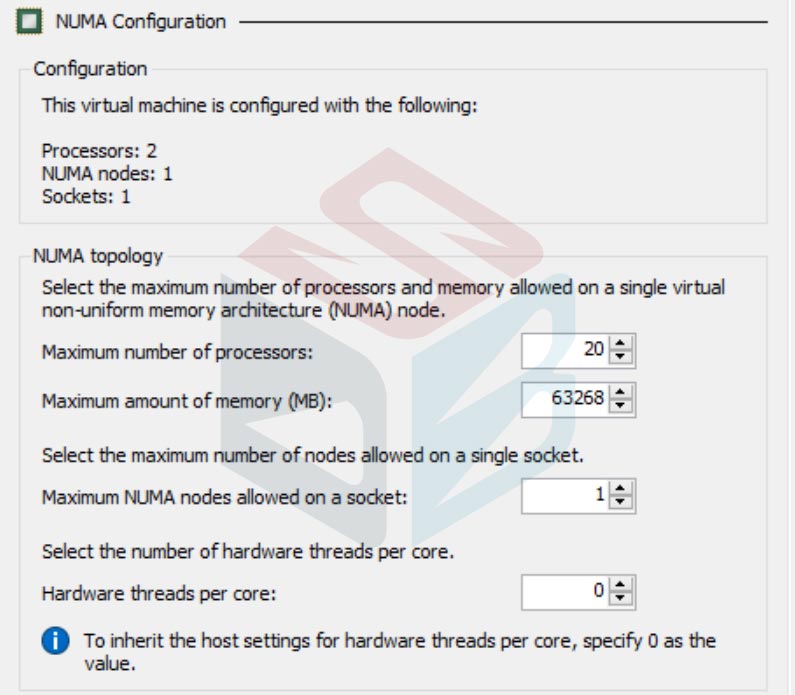

In this case, I had a virtual machine on a host with two Intel Xeon Gold 52xx CPUs and 128 GB of RAM. Its NUMA configuration is shown in figure 2.

I moved the VM to a host with two Intel Xeon Gold 62xx CPUs and 768 GB of RAM. After the migration the NUMA configuration remained the same, so, as mentioned above, the performance of the guest machine can suffer. To fix this, turn off the VM, edit its processor (vCPU) settings in Hyper-V Manager or Failover Cluster Manager, and click the Use Hardware Topology button.

The NUMA settings are then updated to match the new host's hardware configuration (figure 3).
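The size of the mismatch in this example can be estimated with some simple arithmetic. The following is a simplified sketch that assumes one NUMA node per socket and RAM split evenly across sockets. Real Hyper-V values are somewhat lower because the host reserves memory for itself, and features such as sub-NUMA clustering can change the node count. The function names are hypothetical.

```python
# Simplified estimate of physical memory per NUMA node, and a check of whether
# a VM's stored per-node limit still matches its current host.
# Assumptions: one NUMA node per socket, RAM split evenly, no host reserve.
import math


def per_node_memory_gb(total_ram_gb: int, sockets: int) -> float:
    """Approximate physical memory per NUMA node (hypothetical helper)."""
    return total_ram_gb / sockets


def numa_mismatch(vm_node_limit_gb: float, host_ram_gb: int, host_sockets: int) -> bool:
    """True if the VM's per-node memory limit differs from the host's node size."""
    return not math.isclose(vm_node_limit_gb, per_node_memory_gb(host_ram_gb, host_sockets))


old_limit = per_node_memory_gb(128, 2)   # topology calculated on the 52xx host
print(old_limit)                         # 64.0
print(per_node_memory_gb(768, 2))        # 384.0
print(numa_mismatch(old_limit, 768, 2))  # True: the limit still reflects the old host
```

Under these assumptions, the VM keeps a per-node limit of about 64 GB on a host whose nodes hold about 384 GB. Clicking Use Hardware Topology recalculates the settings from the new host.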

Conclusion

It is very important to check this configuration when you move virtual machines from one host to another. It is necessary only in these scenarios:

- From standalone host to Cluster

- From Cluster to standalone host

- From standalone host to standalone host

It is not necessary when moving between hosts of the same cluster, because each node must have the same configuration to pass validation before the cluster is used in production.
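The rule of thumb above can be written as a small helper for a migration checklist. This is a hypothetical sketch: the `Host` type and host names are made up for illustration. The article does not mention moves between two different clusters, and this sketch assumes those also need a review.

```python
# Rule of thumb from the conclusion: review the VM's NUMA settings unless it
# moves between hosts of the same cluster. Host names and the Host type are
# hypothetical; cluster-to-different-cluster moves are assumed to need review.
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Host:
    name: str
    cluster: Optional[str] = None  # None means a standalone host


def needs_numa_review(source: Host, target: Host) -> bool:
    """True if the virtual NUMA configuration should be checked after the move."""
    same_cluster = source.cluster is not None and source.cluster == target.cluster
    return not same_cluster


print(needs_numa_review(Host("hv01"), Host("node1", "CL01")))          # True
print(needs_numa_review(Host("node1", "CL01"), Host("hv02")))          # True
print(needs_numa_review(Host("hv01"), Host("hv02")))                   # True
print(needs_numa_review(Host("node1", "CL01"), Host("node2", "CL01"))) # False
```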

#DBS